Based on materials from The Verge

As artificial intelligence is used more and more to make decisions in our lives, developers face the problem of how to make it more emotionally savvy. This means automating a number of emotional tasks that come naturally to a person: for example, looking at someone's face and understanding how they feel.

To achieve this, tech companies such as Microsoft, IBM, and Amazon offer what they call 'emotion recognition algorithms', which determine how people feel based on facial analysis. For example, if someone has furrowed their brows and pursed their lips, it means that they are angry. If the eyes are wide, the eyebrows are raised, and the mouth is stretched, it means fear. And so on.

These companies' customers can use the technology in a variety of ways, from surveillance systems that look for threats by recognizing 'angry' faces to hiring software that weeds out bored and unmotivated job seekers.

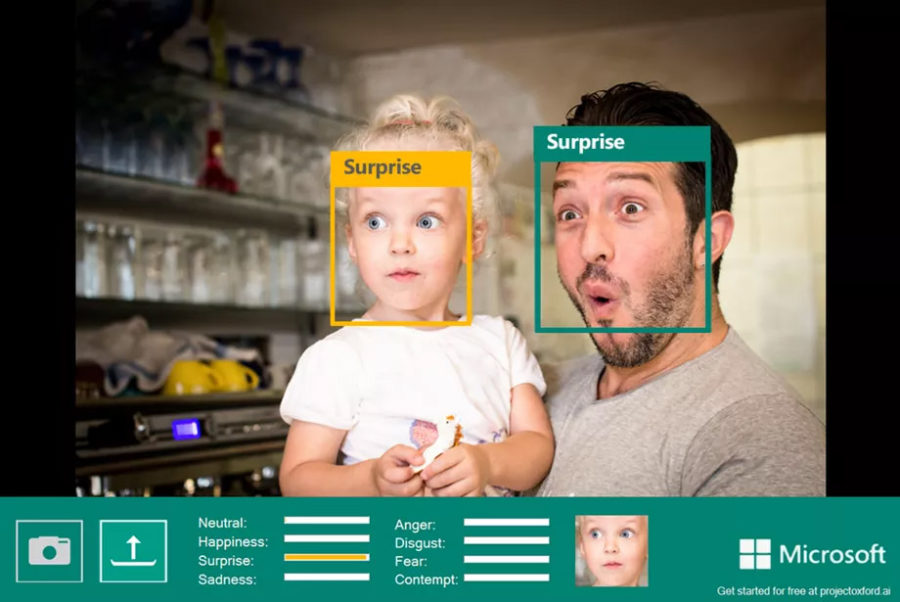

Many tech companies sell algorithms that promise to reliably detect emotions based solely on a person's facial expressions. Source: Microsoft

However, the belief that we can easily draw conclusions about a person's state from visual data is controversial, and, according to a new report reviewing the research in this area, there is no scientific basis for it.

“Companies can say whatever they want, but the data is clear,” says Lisa Feldman Barrett, professor of psychology at Northeastern University and one of the five study authors. “You can recognize that a person is frowning, but that is not the same as recognizing anger.”

The study was commissioned by the Association for Psychological Science, and five scientists were asked to scrutinize the evidence thoroughly. Each scientist represented a different 'camp' in the field of emotion research. “We weren’t sure we would come to an agreement, but we did,” says Barrett. It took two years to study the data, and the report covers the results of over a thousand different studies.

The findings are very detailed and are available in full in the report itself, but the general idea is that emotions are expressed in a very wide range of ways, which makes it difficult to determine how a person feels from a simple set of facial movements.

“On average, when people are angry, they frown less than 30% of the time,” Barrett says. “So frowning is not the expression of anger, but just one of many. That is, more than 70% of the time, angry people do not frown. Moreover, they often frown without being angry.”

This, in turn, means that companies that use AI to gauge human emotion are misleading customers. “Would you like the results to be measured this way?” Barrett asks. “Are you ready for an algorithm that works correctly in only 30% of cases in court, in hiring, at the airport?”
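To see why the 30% figure is so damaging, it helps to run the numbers. The sketch below applies Bayes' theorem to the rule 'a frown means anger'. Only the 30% rate comes from Barrett's quote (and it is an upper bound); the share of angry people and the rate of frowning without anger are hypothetical values chosen purely for illustration, and the function name is ours.

```python
def frown_rule_metrics(p_angry, p_frown_given_angry, p_frown_given_calm):
    """Evaluate the rule 'a frown means anger' using Bayes' theorem."""
    # Overall chance of seeing a frown (law of total probability).
    p_frown = (p_frown_given_angry * p_angry
               + p_frown_given_calm * (1 - p_angry))
    # Precision: of the people flagged as angry, the share who really are.
    precision = p_frown_given_angry * p_angry / p_frown
    # Recall: of the people who are angry, the share the rule catches.
    recall = p_frown_given_angry
    return precision, recall


precision, recall = frown_rule_metrics(
    p_angry=0.10,              # hypothetical: 1 in 10 people is angry
    p_frown_given_angry=0.30,  # upper bound quoted by Barrett
    p_frown_given_calm=0.10,   # hypothetical: frowning without anger
)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

Under these assumed inputs the rule misses 70% of angry people, and only about a quarter of the people it flags are actually angry. Different assumptions would shift the numbers, but not the point: a signal that is both rare among angry people and common among calm ones says little about any individual.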

The study does not deny that common, 'typical' facial expressions may exist. Nor does it deny that our belief in the communicative role of facial expressions matters greatly in society (do not forget that when we see someone in person, we get far more information about their emotions than a primitive facial analysis provides).

The report notes that there is a wide variety of beliefs in the field of emotion research. One that the researchers specifically refute is the idea that emotions are reliably 'imprinted' in facial expressions. This theory is rooted in work done by psychologist Paul Ekman in the 1960s, which he later developed further.

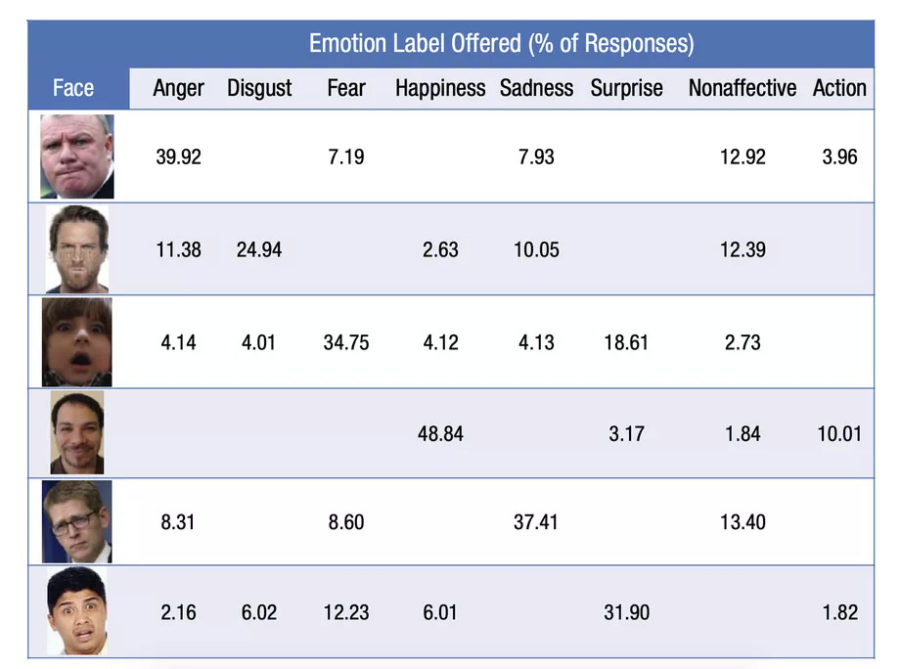

Studies that show strong correlations between certain facial expressions and emotions often rely on flawed methodology, the report says. For example, they use actors making exaggerated facial expressions as the starting point for what an emotion looks like. And when subjects are asked to label these expressions, they are often given a limited choice of emotions, which pushes them toward a certain degree of agreement.

When people are asked to describe the emotions shown on faces without being given specific options to choose from, their answers, as the graph shows, vary significantly.

“People intuitively understand that emotion is a much more complex thing,” Barrett notes. “When I tell people, ‘Sometimes you scream in anger, sometimes you cry in anger, sometimes you laugh, and sometimes you sit silently and plan the destruction of your enemies,’ that convinces them. I say, ‘Listen, when was the last time anyone won an Oscar for frowning in anger?’ Nobody considers that great acting.”

However, such nuances are rarely taken into account by companies that sell emotion recognition tools. In the marketing copy for its algorithms, Microsoft says that advances in AI enable its software to “recognize eight key emotional states … based on universal facial expressions that reflect those states,” which is exactly what the report refutes.

Barrett and her colleagues have warned for years that our model of emotion recognition is too primitive. For their part, the companies that sell these tools often say that their analysis is based on more signals than just facial expressions. The hard question is how these signals are weighed, if that is true at all.

One of the leaders in the $20 billion emotion recognition market, Affectiva, says it is experimenting with collecting additional metrics. For example, last year it launched a tool that is supposed to measure drivers' emotions based on facial and speech analysis. Other researchers are developing metrics such as gait analysis and eye tracking.

In a statement, Affectiva CEO and co-founder Rana el Kaliouby said the report by Barrett and her colleagues was “very much in line” with the company's work. “Like the authors of this report, we don't like the naivety of the industry, which is stuck on six basic emotions and a direct relationship between facial expressions and emotional states,” says el Kaliouby. “The relationship between expression and emotion is a very subtle phenomenon, complex and not prototypical.”

Barrett is confident that in the future we will be able to more accurately measure emotions through more sophisticated metrics. But this will not stop the spread of current limited methods.

In machine learning, we often see metrics used to make decisions not because they are reliable, but simply because they can be measured. It is a technology that excels at finding correlations, and this can lead to all sorts of spurious analyses, from scanning a babysitter's social media posts to determine her 'mood' to analyzing transcripts of corporate calls to predict stock prices. Often, the mere label 'artificial intelligence' lends the exercise an undeserved aura of reliability.

If emotion recognition becomes commonplace, there is a danger that we will simply accept it and begin to adapt to its shortcomings. People already anticipate how various algorithms will interpret their actions online (and, for example, avoid liking posts about children so as not to get ads for diapers later). In the future, we may start putting on exaggerated facial expressions, knowing how machines interpret them. That would not be very different from the signals we send to other people.

Barrett says that perhaps the most important takeaway from the report is that we need to start thinking of emotions as more complex. The expression of emotions is diverse, complex, and situational. She compares the need for this change to Charles Darwin's exploration of the nature of species, and to how his work overturned simplistic ideas about the animal kingdom.

“Darwin realized that the biological category of a species does not have a single essence; it is a category that unites extremely diverse individuals. This is absolutely true in the emotional sphere as well.”

While scientists question the reliability of existing algorithms, those algorithms are being widely deployed in real life, including in our country. Do you think it is legitimate to use such an imperfect technology, for example, in banks or in hiring? So far, it turns out that the constant supervision of Big Brother is only half the trouble, and the real problem lies in how the information collected about you and me is interpreted.